So today I am really inspired to write something about game theory, a subject mathematicians have researched thoroughly for a very long time, yet one that is still interesting even for beginners to the field. I would like to review some of the most basic definitions of game theory that I learned as a student, followed by a review of common categories of games.

We start with the basic definition of game theory:

“Game theory is the study of mathematical models of strategic interaction among rational decision-makers.”

As you can see, this definition implies that game theory is not limited to the study of games and their mechanics, although these later became an important part of developing the theory. The field focuses more heavily on strategies and logical reasoning, applying its results to model the behaviors and outcomes that can occur in real-life situations. This has led to broader readings of the term, such as the study of logical decision-making among individuals in general, but those extensions draw on other fields, which we will not focus on today.

The question is: Why games?

If we think of a game only as a contest decided by skill, strength, or luck, as in a “football game”, the name can indeed be confusing. But if we consider the following definition of a game:

“A form of competitive activity played according to rules.”

then things become much clearer: any given conflict can be generalized into a game. The ingredients of a game (players, rules, and competition) are already present in the conflict itself. It is therefore often in mathematicians' interest to model these situations as games, since once the context is stripped away, the numbers are much easier to manage.

We will proceed to a classic example of a simplified real-life situation:

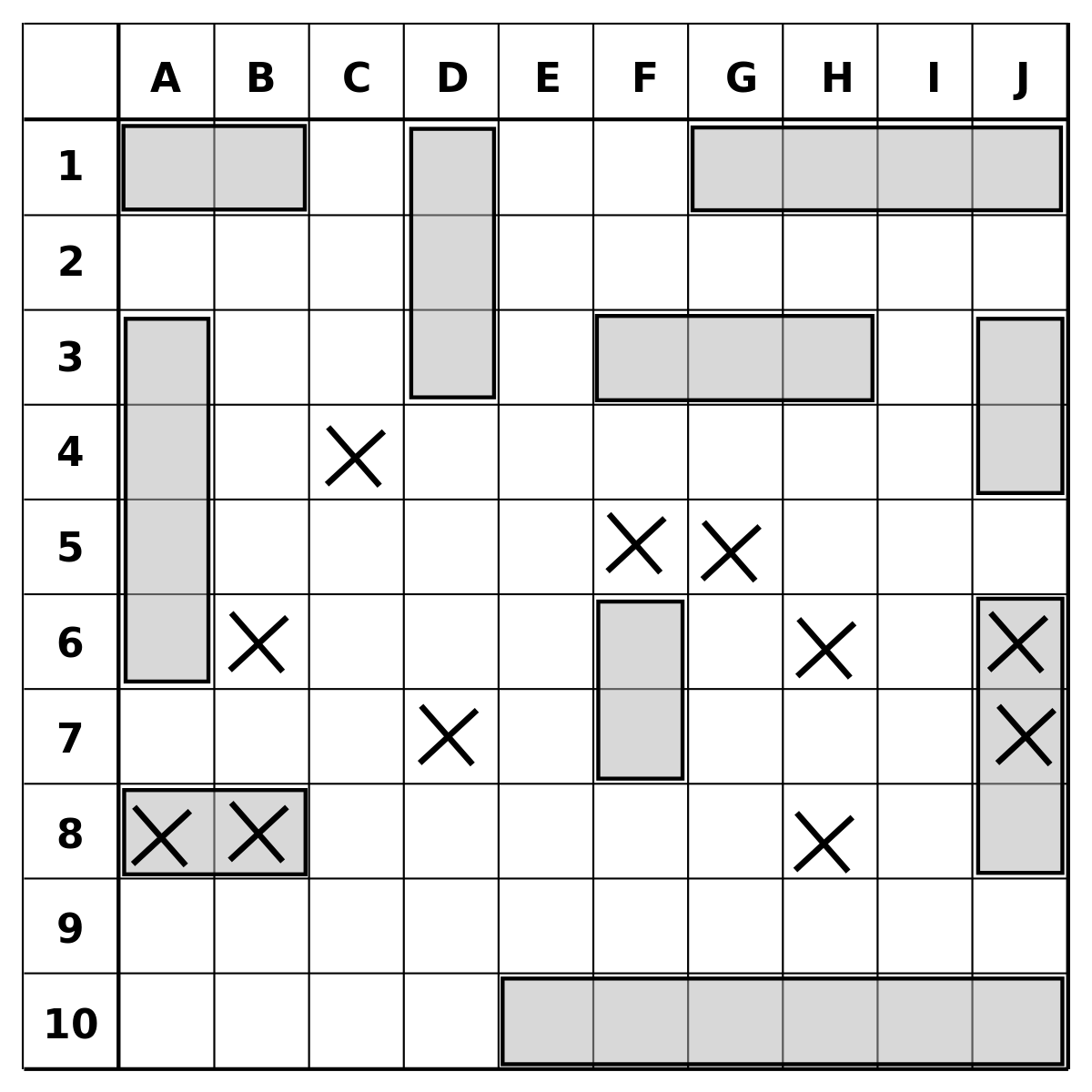

“Mum bought some candies, a multiple of 5 in total, and did not allow the two babies to eat them. They broke the rule anyway, taking turns, and on each turn a baby can take anywhere from 1 to 4 candies. Assume the babies compete for the last candy, which is the best one. Which baby has a winning strategy?”

When we strip away the context and generalize the numbers, we obtain the following version:

“A and B take turns taking candies. On each turn, a player takes anywhere from 1 to n candies. Assume that the number of candies is a multiple of (n+1) and that the player who takes the last candy wins. Which player has a guaranteed winning strategy?”
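Before looking at the solution, it can help to let a computer answer the question by brute force. The following is a minimal Python sketch, not taken from any library; `first_player_wins` is a hypothetical helper name. It uses backward induction: a pile is a win for the player about to move if at least one legal move (taking 1 to n candies) leaves the opponent facing a losing pile, and an empty pile is a loss for the player to move, because the opponent has just taken the last candy.

```python
from functools import lru_cache


def first_player_wins(candies: int, n: int) -> bool:
    """Return True if the player about to move can force a win.

    Hypothetical helper: candies is the current pile size, n is the
    maximum number of candies a player may take in one turn.
    """

    @lru_cache(maxsize=None)
    def wins(pile: int) -> bool:
        # An empty pile means the opponent just took the last candy.
        if pile == 0:
            return False
        # Winning if some legal move leaves the opponent in a losing pile.
        return any(not wins(pile - take) for take in range(1, min(n, pile) + 1))

    return wins(candies)


if __name__ == "__main__":
    n = 4  # the babies' version: take 1 to 4 candies per turn
    for candies in range(1, 16):
        winner = "first" if first_player_wins(candies, n) else "second"
        print(f"{candies:2d} candies: {winner} player wins")
```

With n = 4, the table marks the player to move as losing exactly at 5, 10 and 15 candies, the multiples of n + 1, which already hints that the second player is the one with the winning strategy.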

The game is now in a fairly general form (n could be, for example, the maximum number of candies a baby can hold at once; setting n = 4 recovers the original story). With this version, we can identify several traits of the game:

- The game is sequential: players are taking turns;

- The game is non-cooperative: players cannot form binding agreements;

- The game is symmetric: both players follow the same rules and have the same moves available, so swapping the players does not affect the overall result;

- The game is dynamic: players can adjust their strategies in response to earlier moves;

- The game has perfect information: players have exact records of all the events of the game.

These traits can point to ways of approaching the problem at hand. For this example, the solution is quite simple:

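The second player has a guaranteed winning strategy: whenever the first player takes k candies, with k between 1 and n, the second player takes (n+1)-k candies. Because k is between 1 and n, the reply (n+1)-k is also between 1 and n, so it is always a legal move, and there are always enough candies left for it. Each full round therefore removes exactly n+1 candies, so starting from a multiple of (n+1), the number of candies remaining after every one of the second player's turns is again a multiple of (n+1). This means the first player always faces at least n+1 candies and can never take the last one. Since the pile keeps shrinking, it must eventually reach 0 (which is a multiple of (n+1)) on the second player's turn, so the second player takes the last candy and wins.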
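A quick way to check this argument is to simulate it. The sketch below is illustrative only: the function names `second_player_reply` and `play` are hypothetical, and the first player simply picks random legal moves, so the simulation shows the mirror strategy holding up against arbitrary play rather than proving anything by itself. It also checks the invariant from the argument above (the pile is a multiple of n + 1 after each of the second player's turns).

```python
import random


def second_player_reply(k: int, n: int) -> int:
    """Mirror strategy: answer a take of k candies with (n + 1) - k."""
    if not 1 <= k <= n:
        raise ValueError("the first player's move must be between 1 and n")
    return (n + 1) - k


def play(candies: int, n: int, rng: random.Random) -> str:
    """Play one game: a random first player against the mirror strategy.

    Returns "first" or "second", whichever player takes the last candy.
    """
    if candies <= 0 or candies % (n + 1) != 0:
        raise ValueError("candies must be a positive multiple of n + 1")
    pile = candies
    while True:
        # First player: any legal move, here chosen at random.
        k = rng.randint(1, min(n, pile))
        pile -= k
        if pile == 0:
            return "first"
        # Second player: mirror the move so the round removes n + 1 in total.
        pile -= second_player_reply(k, n)
        # Invariant from the argument: the pile is again a multiple of n + 1.
        assert pile % (n + 1) == 0
        if pile == 0:
            return "second"


if __name__ == "__main__":
    rng = random.Random(0)
    results = [play(candies=20, n=4, rng=rng) for _ in range(1000)]
    print(results.count("second"), "of", len(results), "games won by the second player")
```

Every simulated game should end with the second player taking the last candy, matching the argument; replacing the random first player with any other legal strategy would not change that.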

Several parts of the solution follow directly from these traits. The second player's strategy would not be possible without perfect information, since it depends on knowing the first player's most recent move. Moreover, the gameplay itself, and hence the strategy, only makes sense because the game is sequential and non-cooperative: if the players moved simultaneously, there would be no previous move to respond to, and if they could cooperate, they could simply agree on who gets the last candy, so the result would be very different.

Of course, there are plenty of games with traits different from the ones presented above. I will elaborate on some of them, with examples, in later posts. For now, math on!